Project Background and Goals

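Data quality is crucial in machine learning projects. Natural language processing (NLP) projects usually work with human-written text, where spelling mistakes are common. Datasets collected from social media or forums, for example, can contain many typos, and these hurt model training. Building a spell checker is therefore a worthwhile project that helps improve data quality.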

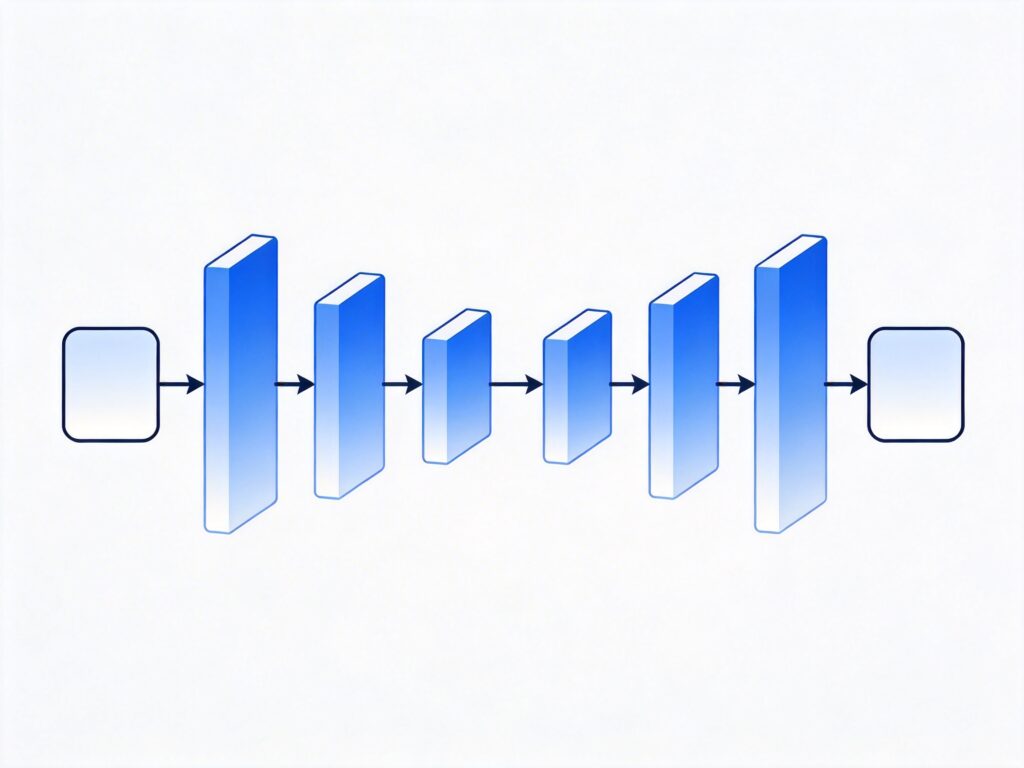

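This project uses a sequence-to-sequence (seq2seq) model implemented in TensorFlow. We focus on how to prepare the data for the model and also discuss some of the model's features. The project uses Python 3 and TensorFlow 1.x (TensorFlow 2.x is now widely used, but the core concepts carry over). The data comes from twenty popular books from Project Gutenberg. To improve the model's accuracy, you can add more books.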

The full code is available on GitHub: https://github.com/Currie32/Spell-Checker

A Preview of the Model's Capabilities

Here are some examples of the model correcting spelling mistakes:

- Input: Spellin is difficult, whch is wyh you need to study everyday.

  Output: Spelling is difficult, which is why you need to study everyday.

- Input: The first days of her existence in th country were vrey hard for Dolly.

  Output: The first days of her existence in the country were very hard for Dolly.

- Input: Thi is really something impressiv thaat we should look into right away!

  Output: This is really something impressive that we should look into right away!

Data Loading and Preprocessing

Loading the Books

First, put all the book files in a folder named "books". The following function loads a single book:

```python
def load_book(path):
    """Read and return the full text of one book file."""
    with open(path) as f:
        return f.read()
```

Get the list of book files:

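```python
from os import listdir
from os.path import isfile, join

path = './books/'

# Keep regular files only and skip hidden files such as .DS_Store;
# sorting makes the file order reproducible across platforms
book_files = sorted(f for f in listdir(path)
                    if isfile(join(path, f)) and not f.startswith('.'))
```

Load the text of every book into a list: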

```python
books = [load_book(path + book) for book in book_files]
```

To see how many words each book contains, use:

```python
for book_file, book in zip(book_files, books):
    print("There are {} words in {}.".format(len(book.split()), book_file))
```

Note: without `.split()`, `len()` returns the number of characters rather than the number of words.
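To make the note concrete, here is a minimal example on a literal string. The string is only a stand-in for one of the books and is not taken from the dataset:

```python
# A literal sample string stands in for one of the books
text = "It was the best of times, it was the worst of times."

num_chars = len(text)          # counts every character, including spaces and punctuation
num_words = len(text.split())  # split() breaks on runs of whitespace, so this counts words

print(num_chars, num_words)    # prints: 52 12
```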

Cleaning the Text

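Because the model takes characters as input, we don't need stemming or stop-word removal. We only need to remove unwanted characters and extra spaces: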

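```python
import re

def clean_text(text):
    '''Remove unwanted characters and extra spaces from the text'''
    text = re.sub(r'\n', ' ', text)
    text = re.sub(r'[{}@_*>()\\#%+=\[\]]', '', text)
    text = re.sub('a0', '', text)
    # Replace leftover quote codes ('91 to '94) with apostrophes or nothing
    text = re.sub("'92t", "'t", text)
    text = re.sub("'92s", "'s", text)
    text = re.sub("'92m", "'m", text)
    text = re.sub("'92ll", "'ll", text)
    text = re.sub("'91", '', text)
    text = re.sub("'92", '', text)
    text = re.sub("'93", '', text)
    text = re.sub("'94", '', text)
    # '.' and '?' are regex metacharacters, so they must be escaped
    text = re.sub(r'\.', '. ', text)
    text = re.sub(r'!', '! ', text)
    text = re.sub(r'\?', '? ', text)
    text = re.sub(' +', ' ', text)  # Removes extra spaces
    return text

# Clean every book; the sentence-splitting step works on clean_books
clean_books = [clean_text(book) for book in books]
```

Building the Vocabulary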

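Building the vocabulary is a standard step, and the code is available on GitHub. The model's input consists of the following characters (78 in total):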

[' ', '!', '"', '$', '&', "'", ',', '-', '.', '/', '0', '1', '2', '3', '4', '5', '6', '7', '8', '9', ':', ';', '<EOS>', '<GO>', '<PAD>', '?', 'A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'J', 'K', 'L', 'M', 'N', 'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y', 'Z', 'a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'j', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r', 's', 't', 'u', 'v', 'w', 'x', 'y', 'z']

We could go further by removing special characters or converting everything to lowercase. To keep the spell checker useful on real text, however, we keep the character set as it is.
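The vocabulary code itself is in the GitHub repository. The sketch below shows roughly what it has to produce, assuming one integer id per character plus the three special tokens. The helpers `build_vocab`, `encode` and `decode` are hypothetical, and their ids will not match the repository's. They build the lookup tables and turn sentences into `int_sentences`, the lists of character ids that the length filter later works on:

```python
# Hypothetical sketch; the real vocabulary code lives in the GitHub repo
SPECIAL_TOKENS = ['<PAD>', '<EOS>', '<GO>']

def build_vocab(texts):
    """Assign an integer id to every distinct character, then to each special token."""
    characters = sorted(set(''.join(texts)))
    vocab_to_int = {token: i for i, token in enumerate(characters + SPECIAL_TOKENS)}
    int_to_vocab = {i: token for token, i in vocab_to_int.items()}
    return vocab_to_int, int_to_vocab

def encode(sentence, vocab_to_int):
    """Convert a sentence into a list of character ids."""
    return [vocab_to_int[character] for character in sentence]

def decode(ids, int_to_vocab):
    """Convert a list of ids back into a string."""
    return ''.join(int_to_vocab[i] for i in ids)

# Toy stand-ins for the cleaned books and the split sentences
clean_books = ["Spelling is difficult. ", "Study every day! "]
sentences = ["Spelling is difficult.", "Study every day!"]

vocab_to_int, int_to_vocab = build_vocab(clean_books)
int_sentences = [encode(sentence, vocab_to_int) for sentence in sentences]
print(len(vocab_to_int))
print(decode(int_sentences[0], int_to_vocab))
```

Because the model works on characters, `len()` of an encoded sentence is its length in characters. This is what makes the later length filter meaningful.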

Splitting Sentences

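Before the data goes into the model, it must be organized into sentences. We split the text on a period followed by a space (". "). Some sentences end with a question mark or exclamation point instead, so they are not separated from the sentence that follows. This is acceptable: as long as the combined sentence does not exceed the maximum length, the model can still handle it.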

Examples:

- Today is a lovely day. I want to go to the beach. (split into two sentences)

- Is today a lovely day? I want to go to the beach. (kept as one long sentence)
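Running the `'. '` split on the two examples shows both cases. The hypothetical `split_sentences` function mirrors the splitting loop. The inputs are written the way `clean_text` leaves them, with a single space after each period and question mark:

```python
def split_sentences(text):
    """Split on '. ' and add back the period that split() removes."""
    return [sentence + '.' for sentence in text.split('. ')]

# Written as clean_text would leave them: a space after every '.', '!' and '?'
first = "Today is a lovely day. I want to go to the beach. "
second = "Is today a lovely day? I want to go to the beach. "

print(split_sentences(first))
# ['Today is a lovely day.', 'I want to go to the beach.', '.']
print(split_sentences(second))
# ['Is today a lovely day? I want to go to the beach.', '.']
```

The trailing `'. '` leaves a stray `'.'` fragment. It is only one character long, so the `min_length` filter (10 characters) discards it.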

The splitting code is:

```python
sentences = []
for book in clean_books:
    # Split on '. ' and add back the period that split() removes
    for sentence in book.split('. '):
        sentences.append(sentence + '.')
```

Filtering by Sentence Length

To keep training time manageable, we keep only sentences whose length falls within a set range:

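```python
max_length = 92
min_length = 10

# int_sentences holds each sentence as a list of character ids (the
# vocabulary code is on GitHub), so len() is the length in characters
good_sentences = [sentence for sentence in int_sentences
                  if min_length <= len(sentence) <= max_length]
```

Splitting the Dataset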

Split the data into a training set and a test set, holding out 15% for testing:

```python
from sklearn.model_selection import train_test_split

training, testing = train_test_split(good_sentences,
                                     test_size=0.15,
                                     random_state=2)
```

Sorting by Length

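Sorting the sentences by length makes training more efficient. Sentences in the same batch then have similar lengths, so less padding is needed: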

```python
# sorted() is stable: sentences of equal length keep their original order,
# which matches scanning the lists once per length from min_length to max_length
training_sorted = sorted(training, key=len)
testing_sorted = sorted(testing, key=len)
```

Generating Training Data with Errors

A key part of this project is generating sentences with spelling mistakes to use as the model's input. The error types are:

- Swapping two adjacent characters (e.g., hlelo → hello)

- Inserting an extra letter (e.g., heljlo → hello)

- Deleting a character (e.g., helo → hello)
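On a plain string, the three error types can be reproduced with the hypothetical helpers below. `noise_maker` applies the same operations to lists of character ids and chooses the position and the error type at random. The helpers here take the position explicitly, so the output is deterministic:

```python
def swap_adjacent(word, i):
    """Swap the characters at positions i and i + 1."""
    return word[:i] + word[i + 1] + word[i] + word[i + 2:]

def insert_letter(word, i, letter):
    """Insert an extra letter just before position i."""
    return word[:i] + letter + word[i:]

def delete_char(word, i):
    """Drop the character at position i."""
    return word[:i] + word[i + 1:]

print(swap_adjacent("hello", 1))       # hlelo
print(insert_letter("hello", 3, "j"))  # heljlo
print(delete_char("hello", 3))         # helo
```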

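Each error type is equally likely, and each character has a 5% chance of being corrupted. This corresponds to `threshold = 0.95` in `noise_maker`, which keeps a character unchanged whenever its random draw falls below `threshold`. On average, that means one error every 20 characters.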

Define the list of letters:

```python
letters = ['a','b','c','d','e','f','g','h','i','j','k','l','m',
           'n','o','p','q','r','s','t','u','v','w','x','y','z']
```

The function that generates the errors:

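```python
import numpy as np

def noise_maker(sentence, threshold):
    '''Swap, insert, or delete characters to create spelling mistakes.

    sentence is a list of character ids; threshold is the probability
    that a character is kept unchanged.'''
    noisy_sentence = []
    i = 0
    while i < len(sentence):
        if np.random.uniform() < threshold:
            # Most characters are kept as-is, since threshold is high
            noisy_sentence.append(sentence[i])
        else:
            new_random = np.random.uniform()
            if new_random > 0.67:
                # ~1/3 chance: swap this character with the next one
                if i == len(sentence) - 1:
                    # The last character has no neighbor to swap with,
                    # so draw again for it (i is not advanced)
                    continue
                noisy_sentence.append(sentence[i + 1])
                noisy_sentence.append(sentence[i])
                i += 1
            elif new_random < 0.33:
                # ~1/3 chance: insert a random lowercase letter before this character
                random_letter = np.random.choice(letters)
                noisy_sentence.append(vocab_to_int[random_letter])
                noisy_sentence.append(sentence[i])
            # Otherwise (~1/3 chance) the character is dropped
        i += 1
    return noisy_sentence
```

Generating Batches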

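Unlike many projects, this one generates its input data on the fly during training. For every batch, the target (correct) sentences are passed through noise_maker to create new inputs with errors. Because a sentence receives a different set of errors each time it is drawn, this greatly increases the variety of the training data.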
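get_batches calls a `pad_sentence_batch` helper that is part of the GitHub code but is not shown in this article. Below is a minimal sketch of such a helper. It assumes right-padding with a `<PAD>` id, hard-coded here as 0 for illustration, whereas the real id would come from `vocab_to_int['<PAD>']`. The hypothetical `count_padding` function then counts how many pad tokens a batching order needs, which shows what sorting by length saves:

```python
PAD_ID = 0  # assumption for illustration; the real code would use vocab_to_int['<PAD>']

def pad_sentence_batch(sentence_batch, pad_id=PAD_ID):
    """Right-pad every sentence in the batch to the length of the longest one."""
    max_len = max(len(sentence) for sentence in sentence_batch)
    return [sentence + [pad_id] * (max_len - len(sentence))
            for sentence in sentence_batch]

def count_padding(sentences, batch_size):
    """Total number of pad tokens needed when batching sentences in the given order."""
    total = 0
    for start in range(0, len(sentences), batch_size):
        batch = sentences[start:start + batch_size]
        padded = pad_sentence_batch(batch)
        total += sum(len(p) for p in padded) - sum(len(s) for s in batch)
    return total

# Toy "sentences" of ids with lengths 3, 9, 4 and 8
toy = [[1] * 3, [1] * 9, [1] * 4, [1] * 8]
print(count_padding(toy, batch_size=2))                   # 10
print(count_padding(sorted(toy, key=len), batch_size=2))  # 2
```

With batches of two, the original order needs 10 pad tokens. The length-sorted order needs only 2, because each batch pairs sentences of similar length.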

```python
import numpy as np

def get_batches(sentences, batch_size, threshold):
    '''Yield batches of noisy inputs, padded targets, and their lengths'''
    for batch_i in range(len(sentences) // batch_size):
        start_i = batch_i * batch_size
        sentences_batch = sentences[start_i:start_i + batch_size]

        # Draw fresh spelling errors for every batch
        sentences_batch_noisy = [noise_maker(sentence, threshold)
                                 for sentence in sentences_batch]

        # Append <EOS> to copies of the targets; appending in place would add
        # another <EOS> to the stored sentences on every epoch
        sentences_batch_eos = [sentence + [vocab_to_int['<EOS>']]
                               for sentence in sentences_batch]

        # Record the true lengths before padding; after padding, every
        # sentence in a batch has the same length
        pad_sentences_lengths = [len(sentence) for sentence in sentences_batch_eos]
        pad_sentences_noisy_lengths = [len(sentence) for sentence in sentences_batch_noisy]

        pad_sentences_batch = np.array(pad_sentence_batch(sentences_batch_eos))
        pad_sentences_noisy_batch = np.array(pad_sentence_batch(sentences_batch_noisy))

        yield (pad_sentences_noisy_batch,
               pad_sentences_batch,
               pad_sentences_noisy_lengths,
               pad_sentences_lengths)
```

Summary and Outlook

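This project demonstrates a spell checker built on a seq2seq model. The results are encouraging, but there is still room for improvement. Options include more advanced architectures (such as CNNs or Transformers) and more training data. Contributions of ideas or code from the community are welcome.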

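I hope this article helps you understand how to build a spell-checking model with TensorFlow. If you have questions or suggestions, feel free to start a discussion.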